Behind the scenes: how we think about semantic model generation

Our feature specs generally have a back-story - an explanation of why we want to build this feature, right now. Tali, our VP of Product, shares the back-story we've used recently for one such case.

When we kicked off the work on the semantic model generation capability, where we can identify semantic models based on customer data and even export them into the leading interfaces (Snowflake Cortex, ChatGPT’s GPT mechanism, etc), we first invested the effort into deeply understanding the customer needs.

Below is what we came up with.

Market Problem

AI tools cannot deliver real value without AI-ready documentation and a reliable semantic layer.

The market is being flooded with AI-powered platforms, and data teams are under pressure - from leadership, vendors, and peers - to “leverage AI” in order to modernize their internal data processes and democratize insights for business stakeholders. However, the barrier is not the availability of AI tools; it’s the readiness of the underlying data.

When data teams attempt to adopt AI (whether through in-house solutions or external vendors), they must first prepare their data for AI consumption. This preparation typically involves:

Selecting the right assets – narrowing down to a meaningful, high-value subset of tables and metrics.

Cleaning the data – removing unused, temporary, or inaccurate tables and fields.

Documenting assets – translating schemas into business-ready, AI-friendly descriptions.

Building a semantic layer – defining relationships, joins, and metrics in a way AI can understand.

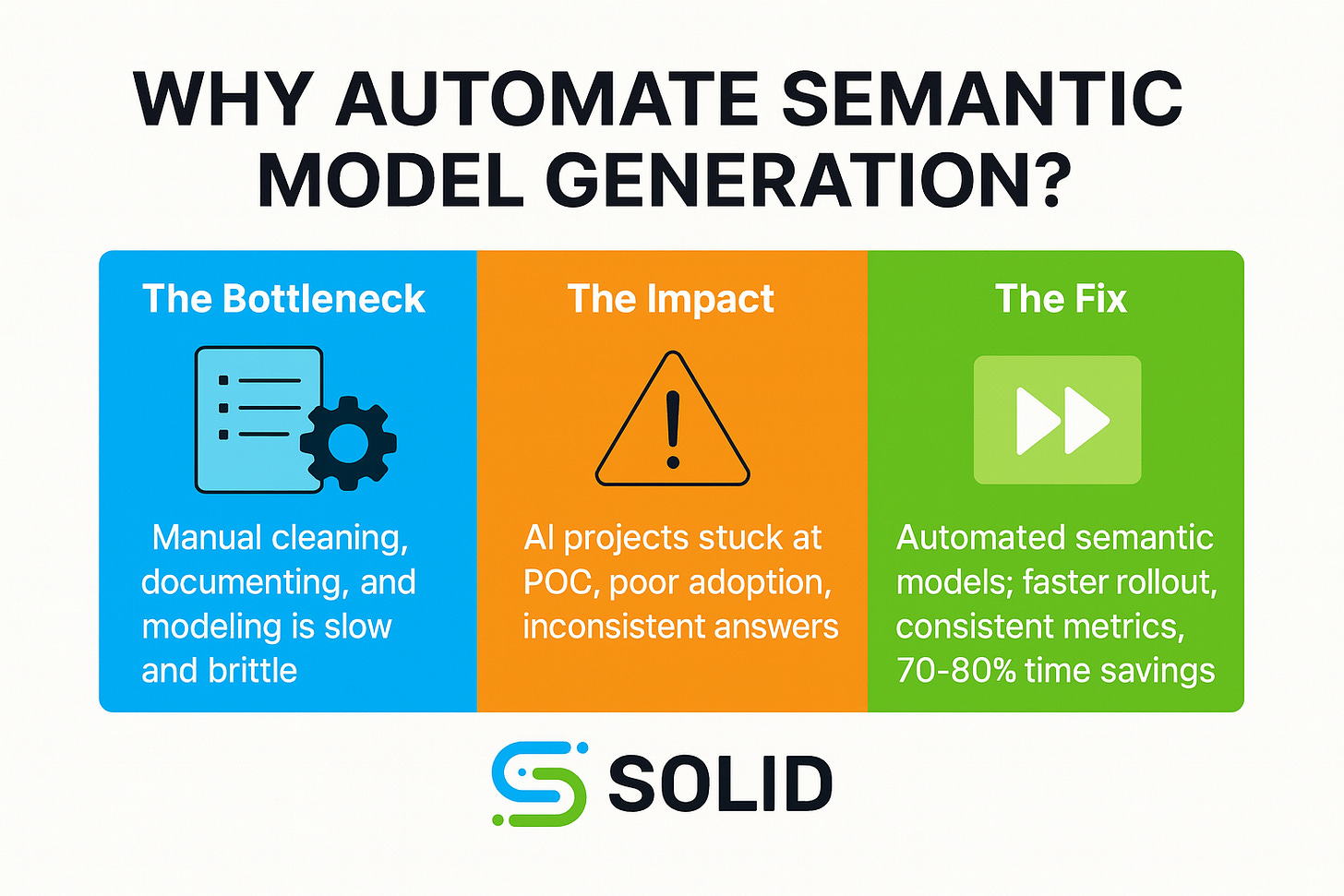

The challenge is that steps 2–4 are highly labor-intensive. Creating even a single semantic model manually takes significant analyst and data engineering effort. Maintaining these models, ensuring they remain accurate, relevant, and aligned with evolving business questions - adds another ongoing burden. As a result, most data and analytics teams can only afford to document and maintain a handful of critical semantic models, leaving large portions of their data estate underutilized for AI initiatives.

This creates several systemic problems:

Slow adoption of AI – because teams cannot operationalize AI without first overcoming documentation and modeling bottlenecks.

AI projects stuck at the POC stage. It is possible to do a limited POC on 20 tables, but you can’t scale it to the entire data stack.

Knowledge gaps and inconsistencies – as only select datasets are modeled, leaving many business questions unserved.

Ongoing maintenance burden – as data changes, manual semantic models quickly become outdated and unreliable.

There is therefore an urgent need for automation. Automatically generated semantic models can dramatically reduce the upfront and ongoing labor required, enabling teams to start leveraging AI tools immediately. This does not just save time - it also makes broader coverage feasible, ensuring more of the organization’s data is AI-ready.

Use cases

Alex is a Data Engineer. He wants to prepare the data before building an AI solution for text-to-insight for the sales teams, so he could start coding quickly instead of spending months cleaning, documenting, and modeling even a small data area just for a POC.

Emma is a Data Analyst. She needs to generate documentations and semantic models so she could spend more time delivering insights instead of manually documenting tables, joins, and metrics.

Sophia is an Analyst who uses LLMs. She wants to provide Gemini or ChatGPT with the right semantic context so she could ask business questions and run analysis in natural language with accurate, governed results.

David is a Data Engineer. He wants to export semantic models into Snowflake Cortex so he could give his business users access to governed, consistent data models directly in their analytics workflows.

Michael is a Business Analyst. He wants to query a dedicated semantic space for Finance so he could ask natural language questions and get answers without waiting on the analytics team.

Sophia is a Head of Analytics. She wants to ensure that metrics and definitions are consistent across the organization so she could increase trust in analytics and reduce reporting conflicts between teams.

Olivia is a Data Leader. She wants to ensure her analysts always work within semantic models tied to business use cases so she could maintain governance, enforce best practices, and make sure analytics outputs are trustworthy.

The feature spec also includes evidence supporting the above content (from hundreds of customer conversations) as well as details on how Soild’s capabilities will address this customer need.

We’re on the journey to save data and analytics team 70%-80% of the time the would have needed to invest in generating a semantic layer, and exporting semantic models, while also drastically driving up accuracy. If you’re interested in seeing this in action, reach out.